1.5 Degrees and the 97% Consensus – or perhaps not.

For the past several millennia, during which human civilization has been marked by general but not consistent growth, one constant has prevailed across most cultures: an unrelenting forward push, despite any obstacle, to achieve improved living conditions and greater longevity; i.e., societal growth. Even the measures taken in periods we can now view, with hindsight, as back-steps on the treadmill of progress were implemented with the aim of betterment: better housing, better food, better clothing, better heating, better sanitation, better transportation, better medical care, better communication, better wages, better environment.

Before the Industrial Revolution, no single society ever strove to accomplish all of those betterments at any single time. Progress during any particular era, therefore, tended to occur piecemeal: some here, some there, some now, some later, across the globe, with trade ensuring that a good idea somewhere would inevitably lead to the same idea with improvements everywhere else. That was until there was a fundamental change to civilization.

That fundamental change to civilization was the discovery of sources of usable, transportable, scalable, concentrated energy in the form of fossil fuels. Modern civilization is the result of one thing and one thing only: the ability to harness and apply energy in a form which multiplied, by many, many times, the power available to do work, as compared with that which had been available from human muscles, draught animals and the burning of wood. The vast energy stored in fossil fuels derives from collected solar energy stored in organic matter from the corpses of past living organisms, chemically altered by and stored within the Earth (ref: Concentrate Please, on this blog).

Before the first steam engine in 1698, the first actually working steam engine (1712), the first steam boat (1807) and steam train (1814), people, news, goods, whatever, traveled and were transported at the same rate as they had been in ancient Babylon – the speed of a donkey cart – and the manufacture of all goods and construction of all structures and infrastructure of civilization was by human manual labor with some aid from animals and burning wood. Buildings were made of stone or wood; iron and steel were used for rare, expensive objects, or for absolute necessities (tools, plow shares, horseshoes, weapons…) and only sparingly then. The application of the concentrated energy available in coal turned a village blacksmith, pounding out a dozen horseshoes by hand over several hours, into a worker whose same effort made sky-scrapers and steam engines not only possible, but affordable.

In the absence of the concentrated energy in fossil fuels, the greatest minds in history (think Leonardo, Archimedes, Paracelsus) were unable to materially improve their own lives (never mind those of the multitudes) despite having ideas which remain a wonder and valid to this day. That was because they had no means to convert those ideas into material reality. The idea of extracting more iron ore from deep mines by pumping the water out remained just an idea, out of reach, until Newcomen’s steam engine (1712) made it possible to lift water higher than the 32-foot limit imposed by the weight of the atmosphere acting against rising water pushed by a manually operated pump. The idea of the pump itself had not changed since earlier times; more power than manpower, or horsepower, was needed to do the heavy lifting.
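The suction-lift limit mentioned above can be checked with a quick calculation. The sketch below is an idealized estimate only: it assumes standard sea-level pressure, fresh water, and a perfect vacuum at the pump, none of which are stated in this post. Under those assumptions the ceiling comes out a little under 34 feet; real pumps fall short of that because of leakage and friction, which is in keeping with the roughly 32-foot figure quoted here.

```python
# Idealized suction-lift limit: the tallest column of water that
# atmospheric pressure alone can hold up (h = P / (rho * g)).
# All constants are assumed textbook values, not figures from this post.

STANDARD_ATMOSPHERE_PA = 101_325.0  # standard sea-level pressure, in pascals
WATER_DENSITY_KG_M3 = 1000.0        # fresh water, approximate
STANDARD_GRAVITY_M_S2 = 9.80665     # standard gravitational acceleration
METERS_PER_FOOT = 0.3048


def max_suction_lift_m(pressure_pa=STANDARD_ATMOSPHERE_PA,
                       density_kg_m3=WATER_DENSITY_KG_M3,
                       gravity_m_s2=STANDARD_GRAVITY_M_S2):
    """Return the height in meters of the water column balanced by pressure_pa."""
    return pressure_pa / (density_kg_m3 * gravity_m_s2)


height_m = max_suction_lift_m()
height_ft = height_m / METERS_PER_FOOT
print(f"{height_m:.1f} m, about {height_ft:.0f} ft")
```

No amount of extra muscle on a suction pump raises that ceiling, because the atmosphere does the pushing. Going deeper means forcing the water up from below, which takes far more power.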

There are dozens of other, similar examples, each of which contributed in its own way to the democratization of material goods. That, after all, is what makes modern civilization: the ability of nearly all members of a society to possess those goods which provide for a standard of living which is perhaps not as luxurious, opulent or pampered as that of the few, but is at least of the same calibre in terms of having enough food, adequate clothing, and the warmth and shelter to shield from the vagaries of the weather.

The one constant throughout history is that all civilizations, everywhere and nearly everywhen, strove to improve. They strove for progress and they strove to grow; to add more protections, more agricultural potential, better abilities to manufacture clothes and the necessary implements to survive. Growth comes when more resources are available to more people, who exploit the small increments of additional time freed up by new labor-saving devices, themselves derived from the new ideas of others, to develop and use more resources to provide to more people…

More resources, to serve the needs of more people can ONLY happen with increased application of power: more oxen to plow more fields, or to turn more mine shafts; more water wheels to turn more mills and more food for the additional people. And more power comes from ONLY one source: MORE ENERGY. Before fossil fuels, that meant more cleared land to provide more grazing for more draught animals, which required more energy for that clearing process…

It was the combination of great minds, freed to create virtually anything by the near-limitless energy supply available from fossil fuels, that made the modern world possible. One without the other, and it could not have happened; the Industrial Revolution and the resulting modern world would have been impossible without both. And the modern world is STILL impossible without fossil fuels which will continue to be true for some time to come, unless one other strategy is adopted (well, expanded, actually).

NO society ever experienced growth using less energy than previously. Even with modern improvements in energy efficiency, the best that could happen with no new energy input to create more power is societal stasis. The societal urge to improve, to progress, to grow, was the norm from pre-history, through pre-industrial times, continuing throughout the industrial revolution right up until the present. Well…almost.

Some time around 2018, however, western civilization became moribund and now stands at the precipice of decline, having become the first civilization to willfully seek its own degradation. Without belaboring the point made above and heaping on more and more examples, and recalling that progress and growth are not possible for a population without using more energy, the premise that we can and must abandon fossil fuels in favor of wind turbines and solar panels is vacant and, quite frankly, a lie on both counts: that we can and that we must. Those complementary lies are propagated most forcibly and vehemently by the United Nations Intergovernmental Panel on Climate Change and the government representatives to the Conference of the Parties – a climate-centered orgy of platitudes convened annually to virtue signal that everyone cares about the planet and all its myriad life forms, down to tapeworms and germs, more than they care about humans. Let’s tackle the lies in turn.

First, the “we can” charade. Solar and wind are dilute sources of energy, as explained in detail in Concentrate Please lower down on the blog home page, and cannot produce the power we need to maintain where we are now – never mind growing. They simply cannot provide that kind of scalable, reliable, transportable power, and the raw materials to transition to the scale of such energy sources are simply not available. Besides, the amount of fossil fuels needed to build out that kind of new infrastructure just about equals the amount which would be burned by just letting civilization run as it currently does.

We can NOT continue our civilization with the more dilute energy provided by wind and solar – PERIOD! At best, growth would halt and stasis would ensue. But what does it mean to NOT grow as a society?

It means that there are no new consumers for goods and services, so for any one business to grow, some other business must shrink. It means that the standard of living must decline because birth rates at the outset of the period of energy stagnation or reduction will result in more people living than can be supported in the same manner. It means that ultimately life expectancy will decrease because the limited resources which can be produced from a static or declining energy sector will not be able to feed and clothe and medicate more people than were alive at the outset of the elimination of fossil fuels. It means an increasing death rate and ultimately population decreases, which must then result in the decline of the society in general. In other words: The entire world becomes the third world.

That’s the best outcome we could expect: a self-inflicted re-visitation of the Dark Ages.

Now for the “we must” canard, but before we go there, I want to reveal the one true alternative to fossil fuels – nuclear energy. We can run our society and even grow with nuclear, using petroleum or coal as feedstock for manufacturing and similar applications. I bring this up now because I am not painting a gloom and doom scenario here. I am simply highlighting the irrationality of where we stand at the moment. But now, I want to focus on the “we must” argument for the remainder of this post, because therein lie the lies.

For 33 years (at the time of this writing) the western industrial/scientific complex has been propagating a multi-partite fallacy that:

- The planet is warming catastrophically as a result of certain human behaviors – specifically, the burning of fossil fuels;

- Thanks to powerful computers, we can project what the climate will be like 100 years from now so we know the continued burning of fossil fuels spells doom for humanity and the Earth;

- If we eliminate just one human behavior, the burning of fossil fuels, we can control the climate for as long as we like.

(HT to Steve Koonin, Former Undersecretary for Science in the Obama Administration, Oct. 2021)

To take those in turn:

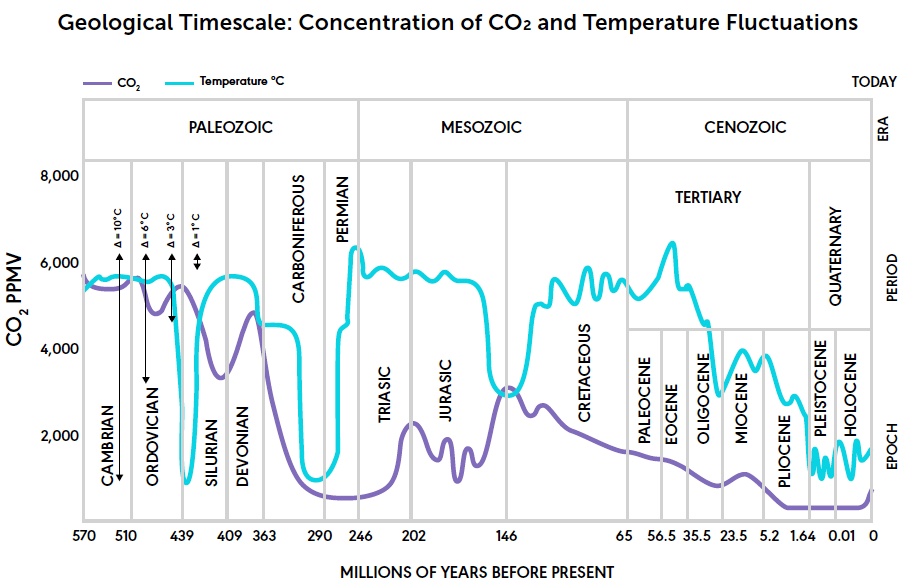

Number 1 is simply not true and it is not borne out by the geological record. In fact, geology reveals that we currently live in one of the colder, nay, one of the coldest periods of Earth history – no arguments there (the blue line in the diagram to the right is temperature; the purple line is CO2 concentration – see the correlation? 😉).

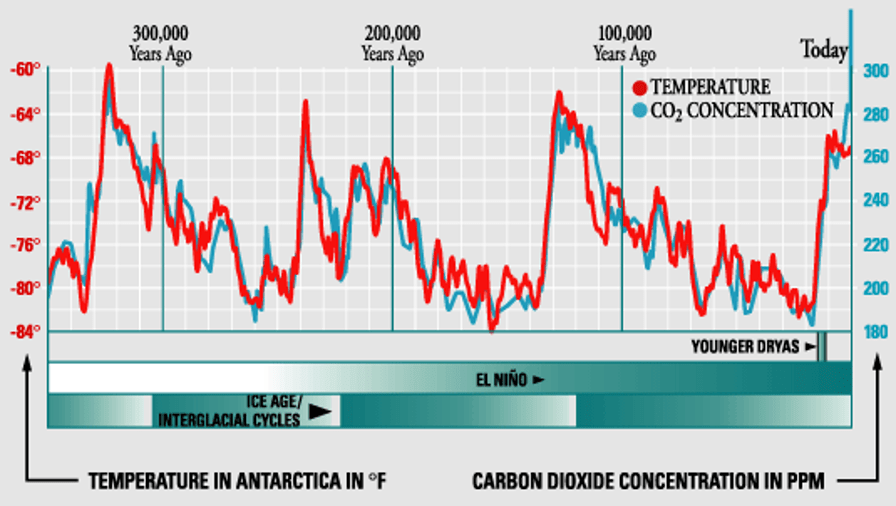

Many catastrophists trot out the correlation between atmospheric CO2 and temperature over the previous 1 million years or so and show there is a positive correlation between the two. Such is true, as seen in the graph.

What you are generally NOT shown is the expanded view of those same data, which I show in the graph to the right. Of particular note, when one zooms in on the data, it is readily apparent that the temperature unerringly changes first, followed an average of 800 years later by an increase in the concentration of CO2.

Why is this, you might ask? It is caused by a simple property of liquids, which can hold more dissolved gas when they are cold than they can when they are warm. It’s why beer goes flat as it warms – the dissolved CO2 (the bubbles) is lost to the air as the beer’s temperature increases. On Earth, when global temperatures decrease, the oceans absorb more CO2 from the atmosphere, and when Earth warms the oceans release it – with a lag time of about 800 years, on average. By the way, a significant contribution to the atmospheric CO2 now being measured is this very process. The oceans are NOT warmed by the air – they are warmed by the sun (another post coming soon on this), which has been going on since the end of the Little Ice Age.

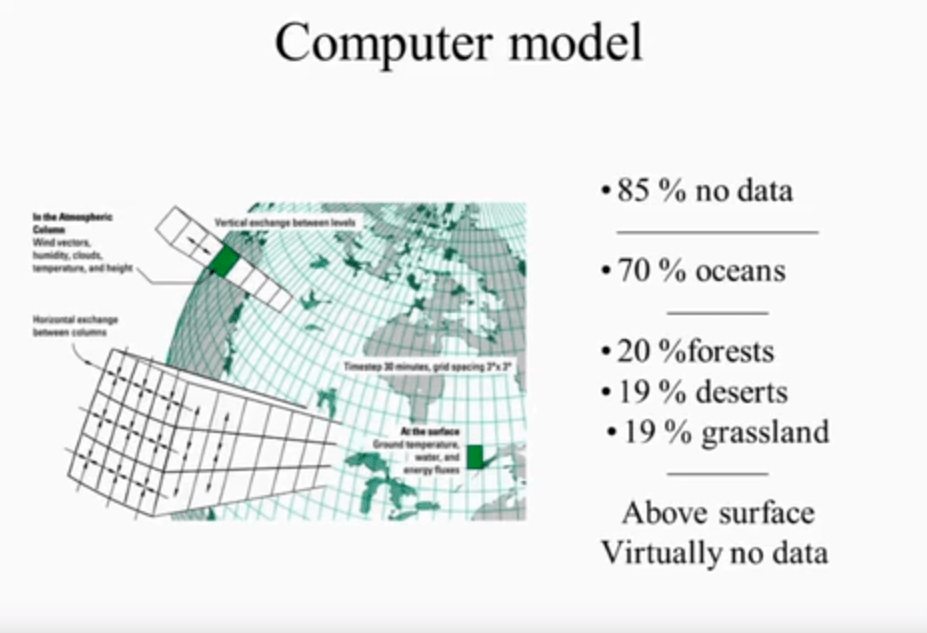

Number 2 is an outlandish canard. The powerful computers have not even been able to back-predict the climate of the past century, for which we can input the starting and ending conditions. Hell, those computers are the same ones used to predict the weather, which is not accurate for more than a couple of days.

Number 3 requires just a little more response than will fit in a simple paragraph.

Since around 2010 it has been possible to convince many in the general population of the perceived veracity of Item No 3 by relying on what is known as the argument from consensus (argumentum ad populum) and the argument from authority (argumentum ad verecundiam). The purveyors of those arguments have been able to establish a death grip (literally) on society based on a single “scientific” paper published by a researcher by the name of Cook from the University of Queensland in Australia and his cohorts, who claimed that 97% of all climate scientists fundamentally agree with the three points, above. In fact, however, when Cook’s data were examined by other scientists (the most critical aspect of the scientific method is that findings must be independently reproducible so whatever is published will be checked by others before it can be considered a valuable contribution to science) it was determined that the number of scientists who actually agree with those points is 0.3%. It has also been determined, using archival data from the intranet, that Cook and his colleagues purposefully doctored the data to establish “climate scare science” as the accepted norm in western governments. Call it collusion, conspiracy, whatever you will, but they succeeded – think about Obama’s various State of the Union addresses in which he not only claimed that ‘carbon pollution’ is wrecking the Earth but that 97% of all scientists agree with that. The 97% canard has been elevated and is now reported by the U.N. as 99.9% of all scientists. The universe of scientists – Earth and atmospheric scientists in particular – does not constitute a very large population. So, considering that the Petition Project has garnered over 31,000 signatories who are scientists and engineers with relevant expertise, the 99.9% claim falls flat before it can even be launched.

In fact, as will be revealed in upcoming posts here at The Terrane, there is absolutely no rationale and no scientific reason that “we must” curtail the use of fossil fuels as a means to control the climate. Such control is not possible to us, and is certainly not even in the realms of the unreality typical of science fiction. It is beyond hubris to contend that in a vast atmosphere, in which multiple gases all play a role in climatic processes and in which an abundant gas (water vapor) is responsible for up to 98% of the so-called greenhouse warming (another pending blog post), one trace gas (CO2 at 0.04%) is the control knob for not only atmospheric temperature, but also extreme weather, long-term drought/flood cycles, and even forest fires.

It is this kind of scare mongering which has convinced a significant proportion of the population of western societies that a lesser standard of living is better than not living at all, which is why many are now on board with accepting, no, embracing, even clamoring for, dilute forms of energy which would necessarily result in a population decline and, consequently, the decline of civilization. I almost can’t blame the average person on the street who has neither the background, nor even the basic wherewithal, to parse the rhetoric and realize that they are being had and that this emperor not only has absolutely no clothes on, but that his skeleton is also wholly devoid of any flesh. But in fact I can blame them. There has been no dearth of clarion alerts heralded by those of us who like to call ourselves Climate Realists, warning that there is no substance to the climate scare and offering to explain why. People don’t want to listen – it is easier to take a positive assertion and be scared than it is to listen to a necessarily-detailed rebuttal (see Jocks for Rocks here at The Terrane for an explanation).

How can I say that? One point to make and then onward: There is not a single piece of empirical evidence from real world observations in which CO2 emitted specifically from the use of fossil fuels has been responsible for any actual warming of the atmosphere – let alone causing tornadoes and increased volcanic eruptions (yes, those are real claims). NOT ONE PIECE OF EVIDENCE – NOT ONE!!!!! I challenge anyone to produce just one, and I will recant and the entire community of scientists who don’t subscribe to the Anthropogenic Global Warming hypothesis will fall in line with the U.N. But besides that, the volume of CO2 in the atmosphere emitted by humans is only a fraction (about 3% of the 400 ppm, or 12 ppm) of the total CO2 which is there naturally as a result mostly of ocean degassing. Considering the average 800-year time lag between Earth warming and CO2 increases, the CO2 rise we see now likely began in the Medieval Warm Period. Despite this factual situation, somehow in the minds of us and our governments, the vastly lesser amount that we emit is ostensibly what causes the warming.

To all of the peddlers of climate gloom, I have one question. An answer which passes scientific muster would gain them some respect and make their hypothesis of CO2-caused apocalypse plausible. Here it is:

What is the ideal global mean surface temperature of the Earth?

This is a critical point, because the U.N. IPCC has set an arbitrary (yes, it was 100% made up) ‘limit’ to the amount of global warming which would be acceptable (acceptable to whom and compared to what?) and that limit is 1.5° C above “pre-industrial” times, established as a date of ~1750 C.E.

In establishing a target of 1.5° C as the “allowable” temperature increase ostensibly resulting from fossil fuel CO2 emissions, the U.N. has issued a decree based on five wholly uncorroborated assumptions:

1. That human emissions of the trace concentration of CO2 are actually causing measurable atmospheric warming (such has never been measured or documented other than in computer models) and that CO2 is the controlling factor by which Earth’s total climate is determined. It also means that all of the factors which operated over geologic time to change the climate before the industrial age no longer play a role;

2. That the amount and rate of warming measured since the end of the Little Ice Age (0.8 degrees C) are outside the range of natural variability – such is patently not the case;

3. That the modest warming observed since the end of the LIA is catastrophic to both civilization and the planet as a whole. In fact:

• Earth is now 14% greener than it was 50 years ago;

• deserts are shrinking;

• plants are thriving;

• crop yields are increasing around the globe;

• global starvation is at the lowest percentage ever.

All because of the increase of available plant food: atmospheric CO2.

4. That there is such a thing in reality as a global mean temperature and that the measurements available reflect that mean. Currently, 85% of the Earth’s surface is not covered by the global temperature measurement network, and the temperatures from that lion’s share of the surface area and overlying atmosphere are entered into the global mean temperature equations using extrapolation from other stations; i.e., they are fabricated by a mathematical algorithm that averages over distances of thousands of miles and land/sea areas of millions of square miles. Moreover, in claims that such-and-such year has been the hottest on record, the increments on which such exceedances are based are measured in hundredths of a degree (0.01), whereas the accuracy of the measurements is on the order of tenths of a degree (0.1). When an error margin (tenths) is larger than the parameter being assessed (hundredths), the result is worthless and meaningless;

5. That the starting point of the targeted measurement period (1750) is at or very near the ideal; i.e., what the average annual surface temperature of the Earth SHOULD BE.

So, by setting the starting temperature at what it was in 1750, the U.N. presents its position that the “ideal” temperature of Earth is about 1.3° C lower than today, or a (mythical) ideal global mean temperature of ~ 13.7 degrees C, as opposed to the current 15 degrees.

What the climate crisis gurus at the IPCC conveniently neglect to note in making that illogical and misleading presumption is that in 1750, the entire Earth was in the depths of a 500-year-long climate cycle known as The Little Ice Age (~1380-1880), which has been the coldest time in the past 8,000 years and almost the coldest since the end of the last glacial advance (see Anthrop-obscene on the home page). The LIA was much colder even than now, which is COLD compared to much of geologic history. The painting above is of the Thames River around 1700. It was consistently so cold that London held annual “Frost Fairs” on the Thames. The picture to the right depicts a Frost Fair on the Thames – note St. Paul’s Cathedral in the background, placing the date of this painting to circa 1700.

Prior to the LIA, the Earth was in a warmer period known as the Medieval Climate Optimum which was approximately the same temperature as it is right now (2022). Before that were the Dark Ages (another cold period) with the Roman Warm Period before that (warmer than right now) and before that the Minoan Warm Period 3,500 years ago – the temperature peak of the Holocene Interglacial. Imagine that! All of that warming and cooling occurred without the burning of ANY fossil fuels…

With all that variability (ref: chart above), how did the U.N. determine that a time in the depths of the LIA (the very long, very low part of the graph above, just before the red line begins) is the “ideal” mean for Earth?

Why didn’t the U.N. choose the ideal temperature of Earth which was prevalent during either the Roman or Medieval warm periods, or even the Minoan warm period (also as warm as now)? Rather, they chose a starting point which stacks the deck because it was during the coldest time in the past 8,000 years. All of these temperature variations and the warm-cold cycles were common knowledge among Earth scientists until they became a threat to the catastrophe narrative. Starting in the 1990s, when the UN assessment reports took on the air of certainty that humans were the sole cause of warming, climate history has been re-written using such discredited shenanigans as the infamous Hockey Stick farce.

Whether our current climate represents an emergency or is just normal depends, to the most significant extent, on where you set the ‘zero-point’ for the target range. If the ideal surface temperature of Earth is that of the Medieval Climate Optimum, which had a temperature similar to that of today, then the Earth could warm up quite a bit from the current temperature and still be within 1.5 degrees C of that earlier time. What the U.N. said was that the baseline temperature should be that of pre-industrial times. But wasn’t the Medieval Climate Optimum (MCO) also during pre-industrial times? And, by the way, Earth began to warm up from the LIA long before humans had emitted enough CO2 to make any difference – it ended in about 1880 and the U.N. says that serious anthropogenic global warming began around 1958. Of the nearly 1.5 degrees C of warming since the end of the LIA, approximately half was before 1930.

If someone were able to come up with an answer to the question of what the ideal mean surface temperature of Earth should be, the follow up questions would then be:

- How far off are we currently from that ideal? As we don’t know the ideal, there is absolutely no way to ascertain if we are within or outside of a desirable, or even a “safe” temperature;

- How does having established an “ideal temperature” account for the fact that life, and the Earth itself, have not only survived, but thrived through all of the widely varying climate ‘extremes’ which have deviated more from the ‘ideal’ over the aeons of geologic time than the “deviation” over the past few decades? Some apologists for AGW try desperately, annually, to ascribe past so-called mass extinctions to climate change and then try to blame the ostensible climate change on CO2. In that way, they attempt to justify the CO2 panic they have manufactured for the present. Sadly for them, such correlations have proven outside the realm of possibility and their speculations remain just that – speculations.

And for the record, no-one, not even the most outlandishly brazen prognosticator of climate Armageddon, has been brash enough to answer the very first question as to what is the ideal temperature for Earth. Without an answer to that, one cannot even ask the follow up questions.

So, if we start with a temperature of ~13.7 C at around 1750, then we are already 1.3 C toward the 1.5 C arbitrarily-established, fiction-based ideal temperature. But, if we were to start in 1300 C.E., or even 400 C.E., then we have almost 1.5 C to go still before the U.N.’s made up max limit would be reached.

It all comes down to what the temperature was when you started your count up. But even that comes down to the main question: What is the ideal temperature for Earth?

There is an answer:

THERE IS NO IDEAL GLOBAL MEAN SURFACE TEMPERATURE!!!

Earth has been both a hot house and a big snowball within the enormity of geologic time. We humans currently thrive in climates as varied as arctic and equatorial. The whole farcical exercise of the mythological ideal temperature and the allowable 1.5 degree exceedance of… something undefined… is nothing more than a shell game.
